In the world of research, the marker p < 0.05 has become almost shorthand for “we found something meaningful”. Yet a p-value on its own cannot carry that weight. In our research and report consulting work, we see many studies that rely solely on p-values and ignore what actually matters: effect sizes, confidence intervals, data quality, and practical relevance.

The over-reliance on p-values

- Many researchers report “this result is significant (p < 0.05)” and stop there, as if significance alone established meaning and impact.

- But a low p-value only tells us that, under a specific statistical model, data at least as extreme as those observed would be unlikely if the null hypothesis (and the model’s other assumptions) were true. ASA

- It does not tell us how large the effect is, whether it is practically relevant, or whether the study design and context justify the conclusion. PMC

- For example, a very large sample can make a trivial difference reach p < 0.05, even though that difference may be irrelevant in real-world terms.
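To make the large-sample point concrete, here is a minimal sketch using only Python's standard library. It assumes a simple two-sample z-test with a known, shared standard deviation and equal group sizes, and the numbers (a 0.5-point difference on a scale with SD 15) are hypothetical. It also reports Cohen's d, a standardized effect size (the difference divided by the SD).

```python
from math import sqrt
from statistics import NormalDist


def two_sample_z_test(mean_diff, sd, n_per_group):
    """Two-sided z-test for a difference in means.

    Assumes both groups share a known standard deviation `sd` and have equal size.
    """
    se = sd * sqrt(2 / n_per_group)          # standard error of the difference
    z = mean_diff / se
    p = 2 * NormalDist().cdf(-abs(z))        # two-sided p-value
    return z, p


# Hypothetical numbers: a 0.5-point difference on a scale whose SD is 15.
mean_diff, sd = 0.5, 15.0
for n in (100, 1_000, 100_000):
    z, p = two_sample_z_test(mean_diff, sd, n)
    print(f"n per group = {n:>7,}: z = {z:5.2f}, p = {p:.2g}, Cohen's d = {mean_diff / sd:.3f}")
```

The effect size (d ≈ 0.03) is the same in every row; only the sample size changes, and with it the p-value. With 100,000 participants per group, the trivial difference becomes highly “significant”.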

Why this matters

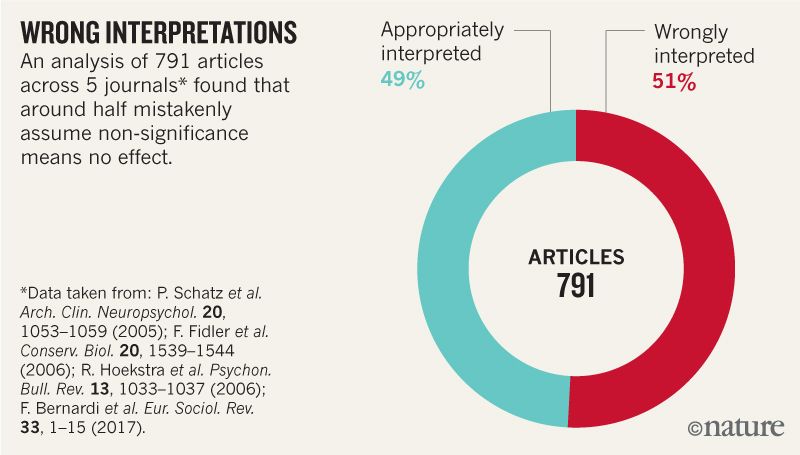

- When p-values are treated as the gatekeeper of “success”, we get:

  - Selective publishing of “significant” results (the file-drawer problem)

  - p-hacking and data-dredging to achieve p < 0.05

  - Misinterpretation: “statistical significance = real-world importance” (false)

- These issues contribute to the reproducibility crisis in many scientific fields. Columbia Statistics
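One common route to p-hacking is to test many outcomes and report whichever one crosses the threshold. The hypothetical simulation below (standard library only) runs 1,000 “studies” in which there is no true effect, tests 20 independent outcomes per study with a z-test that assumes a known SD of 1, and counts how often at least one outcome reaches p < 0.05.

```python
import random
from math import sqrt
from statistics import NormalDist, fmean


def z_test_p(a, b, sd=1.0):
    """Two-sided p-value for a difference in means, assuming a known common SD."""
    se = sd * sqrt(1 / len(a) + 1 / len(b))
    z = (fmean(a) - fmean(b)) / se
    return 2 * NormalDist().cdf(-abs(z))


def any_significant(n_outcomes, n_per_group, rng):
    """Simulate one study with NO true effect; return True if any outcome has p < 0.05."""
    for _ in range(n_outcomes):
        # Both groups come from the same distribution, so every "hit" is a false positive.
        a = [rng.gauss(0, 1) for _ in range(n_per_group)]
        b = [rng.gauss(0, 1) for _ in range(n_per_group)]
        if z_test_p(a, b) < 0.05:
            return True
    return False


rng = random.Random(2016)            # fixed seed so the run is reproducible
n_studies, n_outcomes = 1_000, 20
hits = sum(any_significant(n_outcomes, 20, rng) for _ in range(n_studies))
rate = hits / n_studies
print(f"Studies with at least one 'significant' outcome: {rate:.1%}")
print(f"Theoretical rate, 1 - 0.95**{n_outcomes}: {1 - 0.95 ** n_outcomes:.1%}")
```

With 20 independent tests at α = 0.05, the expected chance of at least one false positive is 1 - 0.95^20, about 64%, and the simulated rate should land close to that, even though no real effect exists anywhere.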

Introducing the 2016 American Statistical Association (ASA) Statement on Statistical Significance and P-Values

In March 2016, the ASA released a landmark statement setting out how p-values should, and should not, be used and interpreted. ASA

The statement sets out six principles:

- P-values can indicate how incompatible the data are with a specified model. Qi Statistics

- P-values do not measure the probability that the hypothesis is true or that the data were produced by random chance alone. PMC

- Scientific conclusions and business or policy decisions should not be based only on whether a p-value passes a specific threshold. Taylor & Francis Online

- A p-value, or statistical significance, does not measure the size of an effect or its practical importance. Department of Statistics

- Proper inference requires full reporting and transparency (including all analyses conducted, the methods used, and how data were selected). ASA

- By itself, a p-value does not provide a good measure of evidence regarding a model or hypothesis. CrossFit

The ASA described the statement as intended to steer research into a “post p < 0.05 era”. ASA
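The principle that a p-value is not the probability that a hypothesis is true is easy to state and easy to forget. A back-of-the-envelope calculation shows why p < 0.05 does not mean a 5% chance that the null hypothesis is true: among significant results, the share coming from true nulls depends on how many tested hypotheses are real effects and on the study's power. Both inputs below are hypothetical assumptions, and in practice the first is rarely known.

```python
def share_of_false_positives(prior_true_effect, power, alpha=0.05):
    """Among results with p < alpha, the expected share where the null hypothesis is actually true.

    prior_true_effect: assumed fraction of tested hypotheses with a real effect (hypothetical input)
    power: assumed probability of getting p < alpha when a real effect exists
    """
    true_positives = prior_true_effect * power
    false_positives = (1 - prior_true_effect) * alpha
    return false_positives / (true_positives + false_positives)


for prior in (0.5, 0.1, 0.01):
    share = share_of_false_positives(prior, power=0.8)
    print(f"{prior:.0%} of tested effects real -> {share:.0%} of 'significant' results are false positives")
```

With 80% power, that share is about 6% when half of the tested effects are real, about 36% when only one in ten is, and around 86% when only one in a hundred is. The same “p < 0.05” can mean very different things.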

What researchers should consider beyond p-values

✅ Effect size

Effect size quantifies how large a difference, or how strong a relationship, actually is. A small effect, even if “statistically significant”, may be irrelevant in practice.
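One widely used effect-size measure for a difference between two group means is Cohen's d: the mean difference divided by the pooled standard deviation. A minimal sketch with made-up scores:

```python
from math import sqrt
from statistics import fmean, variance


def cohens_d(a, b):
    """Cohen's d: difference in group means divided by the pooled sample standard deviation."""
    n_a, n_b = len(a), len(b)
    pooled_var = ((n_a - 1) * variance(a) + (n_b - 1) * variance(b)) / (n_a + n_b - 2)
    return (fmean(a) - fmean(b)) / sqrt(pooled_var)


# Hypothetical scores for two small groups.
treatment = [5, 6, 7, 8, 9]
control = [4, 5, 6, 7, 8]
print(f"Cohen's d = {cohens_d(treatment, control):.2f}")
```

Cohen's rough benchmarks (about 0.2 small, 0.5 medium, 0.8 large) are conventions rather than rules; whether an effect matters depends on the field and the decision at stake.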

✅ Confidence intervals (or credible intervals)

These give a range of values for the true effect that are compatible with the data at a chosen confidence level (often 95%). A result with p < 0.05 but a very wide interval still leaves the size of the effect highly uncertain.
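A minimal sketch of a 95% confidence interval for a difference between two group means, using a large-sample normal approximation (for small samples, a t-based interval is more appropriate and somewhat wider). The summary statistics, a 6-point difference with SD 12 and 40 people per group, are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def mean_diff_ci(mean_diff, sd_a, sd_b, n_a, n_b, level=0.95):
    """Large-sample (normal-approximation) CI and two-sided p-value for a difference in means."""
    se = sqrt(sd_a**2 / n_a + sd_b**2 / n_b)          # standard error of the difference
    z_crit = NormalDist().inv_cdf(0.5 + level / 2)    # about 1.96 for a 95% interval
    p = 2 * NormalDist().cdf(-abs(mean_diff / se))
    return (mean_diff - z_crit * se, mean_diff + z_crit * se), p


# Hypothetical summary statistics: 6-point difference, SD 12 in each group, 40 per group.
(low, high), p = mean_diff_ci(6.0, 12.0, 12.0, 40, 40)
print(f"p = {p:.3f}, 95% CI for the difference: ({low:.2f}, {high:.2f})")
```

Here p is about 0.025, yet the interval runs from under 1 point to over 11 points: the data are compatible with anything from a negligible effect to a substantial one. Because the interval and the p-value come from the same normal approximation, a 95% interval that excludes zero corresponds to a two-sided p below 0.05.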

✅ Practical relevance/context

Ask: Does this matter in real-world terms? Is the magnitude meaningful? Does the study design support the claims?

✅ Study design, measurement quality, sample size

A large sample can detect trivial differences; a small sample may miss meaningful ones. And a p-value cannot compensate for flaws in design or measurement.
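Sample size and statistical power go together. The sketch below approximates the power of a two-sided, two-sample test (normal approximation, equal group sizes) to detect a hypothetical standardized effect of d = 0.5, often labelled “medium”.

```python
from math import sqrt
from statistics import NormalDist


def approx_power(d, n_per_group, alpha=0.05):
    """Approximate power of a two-sided, two-sample z-test for a standardized effect size d."""
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha / 2)
    shift = d * sqrt(n_per_group / 2)   # expected z-statistic when the effect is real
    return std_normal.cdf(shift - z_crit) + std_normal.cdf(-shift - z_crit)


for n in (10, 20, 64, 200):
    print(f"n per group = {n:>3}: power to detect d = 0.5 is about {approx_power(0.5, n):.0%}")
```

Under these assumptions, about 20 people per group gives roughly a one-in-three chance of detecting the effect, while about 64 per group gives roughly 80% power. A non-significant result from a small study is therefore weak evidence that no effect exists.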

✅ Alternative/integrative approaches

- Bayesian methods: allow direct probability statements about hypotheses, given an explicit prior.

- Likelihood ratios and Bayes factors: quantify how strongly the data support one hypothesis relative to another.

- Focus on estimation and prediction rather than “significance testing”. Columbia Statistics
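As an illustration of a Bayes factor, the sketch below compares a point null (a success probability of exactly 0.5) with an alternative under which the probability is uniformly distributed between 0 and 1. The uniform prior is an assumption, and Bayes factors can change substantially with the prior. The data, 5,100 successes in 10,000 trials, are hypothetical.

```python
from math import exp, lgamma, log


def log_binom_pmf(k, n, theta):
    """Log of the binomial probability of k successes in n trials."""
    return (lgamma(n + 1) - lgamma(k + 1) - lgamma(n - k + 1)
            + k * log(theta) + (n - k) * log(1 - theta))


def bayes_factor_01(k, n):
    """BF01 for H0: theta = 0.5 versus H1: theta ~ Uniform(0, 1).

    Under the uniform prior, the marginal likelihood of any k is exactly 1 / (n + 1).
    """
    return exp(log_binom_pmf(k, n, 0.5)) * (n + 1)


def two_sided_binomial_p(k, n):
    """Exact two-sided p-value for H0: theta = 0.5 (symmetric, so double the upper tail)."""
    k = max(k, n - k)
    upper = sum(exp(log_binom_pmf(i, n, 0.5)) for i in range(k, n + 1))
    return min(1.0, 2 * upper)


k, n = 5_100, 10_000   # hypothetical: 51% "successes" in 10,000 trials
print(f"p-value = {two_sided_binomial_p(k, n):.3f}")
print(f"BF01    = {bayes_factor_01(k, n):.1f}  (values > 1 favour H0 over H1)")
```

Here the p-value falls just below 0.05, while the Bayes factor (roughly 11) favours the null over this particular alternative, a pattern sometimes called the Jeffreys-Lindley paradox. The two numbers answer different questions, which is why reporting only the p-value can mislead.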

Why a research & report consulting partner matters

At Research & Report Consulting, we specialise in guiding researchers through these issues:

- Moving beyond stars and thresholds to meaningful interpretation.

- Translating results into effect sizes, confidence intervals, and practical implications.

- Ensuring transparency, reproducibility and integrity in reporting.

Our mission: to empower you to draw data-driven, context-rich, high-impact conclusions, not just report “significant” p-values.

We help you interpret results with integrity, beyond stars and thresholds.

Reach out to explore how we can elevate your research validity and impact.

What’s the most surprising “significant but meaningless” result you’ve encountered in your research, and how did you dig deeper? Let’s start a discussion below.

Want research services from Research & Report experts? Please contact us.