In research and consulting practice, one critical error keeps recurring: applying measurement scales before the concept is fully defined. At Research & Report Consulting, we see it time and again, and the consequences are serious: data that misleads, reports that misinform, and decisions that rest on sand.

Why It Happens

- Researchers are eager to move on to data collection and to run tools like SPSS or STATA, so they skip the conceptual-definition phase.

- They adopt ready-made measurement scales without verifying whether the underlying construct aligns with their study context.

- Operationalisation (turning a concept into measurable items) is treated as a mechanical step rather than a theoretical one — resulting in a concept-operationalisation mismatch.

The Core Problem: Concept-Operationalisation Mismatch

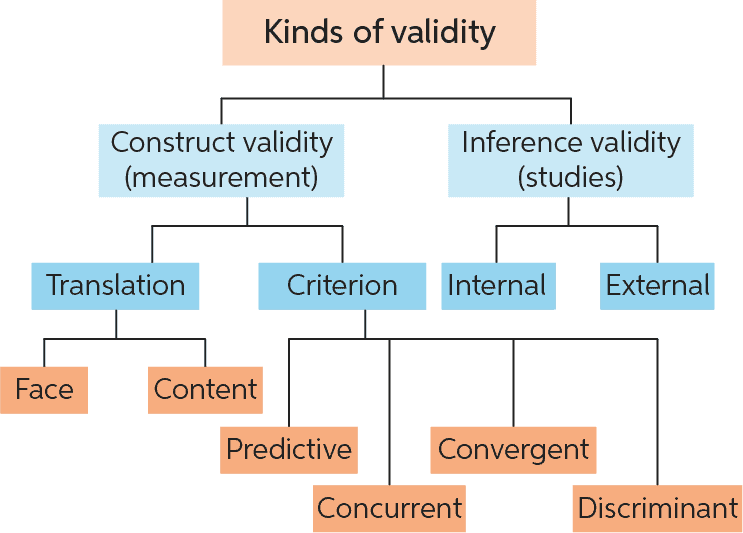

When a scale is used without a clear definition of the construct it is meant to measure, the result is often an instrument that looks reliable on the surface but has weak construct validity. According to validity theory:

“Construct validity is about how well a test measures the concept it was designed to evaluate.”

It lies at the heart of measurement quality. If your operationalisation doesn’t map to your construct, you are measuring something else — or worse, nothing meaningful.

What that mismatch looks like

- You measure “job satisfaction” with items that actually tap “organisational support” or “employee mood.”

- You adopt an existing “innovation readiness” scale but your context defines innovation differently — leading to misalignment.

- You rely on high Cronbach’s alpha and move on — ignoring that reliability alone does not guarantee validity.
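To make the last point concrete, here is a minimal sketch of how Cronbach's alpha is computed. The responses are invented for illustration (six hypothetical respondents, three items scored 1 to 5), and the function name `cronbach_alpha` is ours, not part of any statistics package. The formula only looks at how the items co-vary; it never looks at what the items say.

```python
from statistics import variance


def cronbach_alpha(items):
    """Cronbach's alpha for a list of item columns.

    `items` is a list of lists: one inner list of respondent scores per item.
    Uses sample variances (n - 1 denominator).
    """
    k = len(items)
    if k < 2:
        raise ValueError("Cronbach's alpha needs at least two items")
    # Total scale score for each respondent (sum across items).
    totals = [sum(scores) for scores in zip(*items)]
    item_variance_sum = sum(variance(column) for column in items)
    return (k / (k - 1)) * (1 - item_variance_sum / variance(totals))


# Hypothetical data: items labelled "job satisfaction" in the questionnaire,
# but worded so that they may really be tapping employee mood.
item_1 = [4, 5, 2, 3, 5, 1]
item_2 = [4, 4, 2, 3, 5, 2]
item_3 = [5, 5, 1, 3, 4, 1]

alpha = cronbach_alpha([item_1, item_2, item_3])
print(f"Cronbach's alpha: {alpha:.3f}")  # high (above 0.9) for this toy data
```

The alpha here is high because the three items move together, and it would be just as high if they all measured mood instead of satisfaction. Internal consistency tells you the items agree with each other, not that they agree with your construct definition.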

Construct Validity: The Guardrail

Construct validity demonstrates that your instrument measures what it ought to. It involves:

- Convergent validity: your measure correlates strongly with other established measures of the same construct (Questionmark).

- Discriminant validity: your measure does not correlate strongly with measures of unrelated constructs (Questionmark).

- A solid theoretical network (often called a nomological network) underpinning how your construct relates to other variables (PMC).
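The first two checks above can be sketched as simple correlations. This is an illustration only: the scores are invented, the variable names are hypothetical, and the cut-offs (0.7 and 0.3) are assumptions chosen for the example rather than fixed standards. In a real study you would justify thresholds from your own literature and sample.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation between two equal-length lists of scores."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two equal-length lists with at least 2 values")
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cross = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    ss_x = sum((a - mean_x) ** 2 for a in x)
    ss_y = sum((b - mean_y) ** 2 for b in y)
    return cross / sqrt(ss_x * ss_y)


# Hypothetical total scores for eight respondents.
new_scale = [12, 15, 9, 20, 17, 11, 14, 18]            # the scale under test
established_measure = [13, 16, 10, 19, 18, 10, 15, 17]  # same construct
unrelated_measure = [5, 7, 6, 6, 4, 6, 8, 6]            # a distinct construct

r_convergent = pearson_r(new_scale, established_measure)
r_discriminant = pearson_r(new_scale, unrelated_measure)

# Illustrative cut-offs only (assumptions, not universal rules).
CONVERGENT_MIN = 0.7
DISCRIMINANT_MAX = 0.3

print(f"convergent r = {r_convergent:.2f}, "
      f"passes: {r_convergent >= CONVERGENT_MIN}")
print(f"discriminant r = {r_discriminant:.2f}, "
      f"passes: {abs(r_discriminant) <= DISCRIMINANT_MAX}")
```

Which comparison measures you choose is itself a theoretical decision: the check only means something if the "established" and "unrelated" measures follow from your construct definition.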

Why You Should Care (Beyond Academic Idealism)

- Poor decisions: When metrics don’t reflect the true concept, business or policy decisions based on those metrics may misfire.

- Wasted resources: Time and money spent on instrument development, data collection and analysis yield weak returns.

- Credibility risk: Research reports that fail to address construct validity raise doubts about your methodology and findings.

At Research & Report Consulting: Our Approach

➡ We ensure conceptual clarity before you touch SPSS or STATA. Our workflow includes:

- Defining the construct: We work with the client to articulate what exactly is meant by the concept—its dimensions, boundaries, context.

- Mapping operationalisation: We design or verify measurement items, ensure alignment with the construct definition, and test for concept-item fit.

- Validating the measure: We advise on pilot testing, factor analysis, convergent/discriminant checks, and nomological mapping.

- Data-analysis preparation: Once construct alignment is confirmed, we guide the software implementation with confidence.

The above visuals help illustrate how validity types inter-relate.

Quick Reference – Checklist for Researchers

- Did you explicitly define your construct in theory and in your context?

- Does your operationalisation (items/scale) reflect exactly what the definition describes?

- Did you conduct or plan for convergent/discriminant validity testing?

- Are you confident that high reliability (e.g., Cronbach’s alpha) is not misleading you into assuming validity?

- Are you ready to analyse in SPSS/STATA only after these prior steps?

Final Thought

In measurement-driven research, the temptation is to rush to the software and crunch numbers. Yet the strongest research comes when theory is given its proper place. At Research & Report Consulting, we say:

“We ensure conceptual clarity before you touch SPSS or STATA.”

Because real measurement excellence begins long before data entry.

What’s your biggest challenge when operationalising a construct in your current research? Let’s discuss in the comments below.

References

- Bhandari, P. (2022). Construct Validity | Definition, Types, & Examples. Scribbr. Retrieved June 22, 2023.

- Questionmark. How to Measure Construct Validity. Questionmark blog (questionmark.com).

- Simply Psychology. Construct Validity: Definition, Types & Examples. Retrieved last year.

- Wikipedia. Multitrait–Multimethod Matrix.

- Wikipedia. Construct validity.

Want research services from Research & Report experts? Please contact us.